Approximating Wasserstein distances with PyTorch

Many problems in machine learning deal with the idea of making two probability distributions to be as close as possible. In the simpler case where we only have observed variables $\mathbf{x}$ (say, images of cats) coming from an unknown distribution $p(\mathbf{x})$, we’d like to find a model $q(\mathbf{x}\vert\theta)$ (like a neural network) that is a good approximation of $p(\mathbf{x})$. It can be shown1 that minimizing $\text{KL}(p\Vert q)$ is equivalent to minimizing the negative log-likelihood, which is what we usually do when training a classifier, for example. In the case of the Variational Autoencoder, we want the approximate posterior to be close to some prior distribution, which we achieve, again, by minimizing the KL divergence between them.

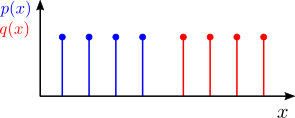

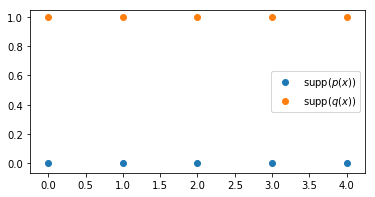

In spite of its wide use, there are some cases where the KL divergence simply can’t be applied. Consider the following discrete distributions:

The KL divergence assumes that the two distributions share the same support (that is, they are defined in the same set of points), so we can’t calculate it for the example above. This and other computational aspects motivate the search for a better suited method to calculate how different two distributions are.

In this post I will

- give a brief introduction to the optimal transport problem,

- describe the Sinkhorn iterations as an approximation to the solution,

- calculate Sinkhorn distances using PyTorch,

- describe an extension of the implementation to calculate distances of mini-batches

Update (July, 2019): I’m glad to see many people have found this post useful. Its main purpose is to introduce and illustrate the problem. To apply these ideas to large datasets and train on GPU, I highly recommend the GeomLoss library, which is optimized for this.

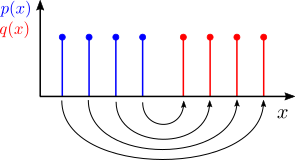

Moving probability masses

Let’s think of discrete probability distributions as point masses scattered across the space. We could measure how much effort it would it take to move points of mass from one distribution to the other, as in this example:

We can then define an alternative metric as the total effort used to move all points. We can formalize this intuitive notion by first introducing a coupling matrix $\mathbf{P}$ that represents how much probability mass from one point in the support of $p(x)$ is assigned to a point in the support of $q(x)$. For these uniform distributions we have that each point has a probability mass of $1/4$. If we order the points in the supports of the example from left to right, we can write the coupling matrix for the assignment shown above as:

\[\mathbf{P} = \begin{pmatrix} 0 & 0 & 0 & \tfrac{1}{4}\\ 0 & 0 & \tfrac{1}{4} & 0\\ 0 & \tfrac{1}{4} & 0 & 0\\ \tfrac{1}{4} & 0 & 0 & 0\\ \end{pmatrix}\]That is, mass in point 1 in the support of $p(x)$ gets assigned to point 4 in the support of $q(x)$, point 2 to point 3, and so on, as shown with the arrows above.

In order to know how much effort the assignment takes, we introduce a second matrix, known as the distance matrix. Each entry $\mathbf{C}_{ij}$ in this matrix contains the cost of moving point $i$ in the support of $p(x)$ to point $j$ in the support of $q(x)$. One way to define this cost is to use the Euclidean distance between points, also known as the ground distance. If we assume the supports for $p(x)$ and $q(x)$ are $\lbrace 1,2,3,4\rbrace$ and $\lbrace 5,6,7, 8\rbrace$, respectively, the cost matrix is:

\[\mathbf{C} = \begin{pmatrix} 4 & 5 & 6 & 7 \\ 3 & 4 & 5 & 6 \\ 2 & 3 & 4 & 5 \\ 1 & 2 & 3 & 4 \end{pmatrix}\]With these definitions, the total cost can be calculated as the Frobenius inner product between $\mathbf{P}$ and $\mathbf{C}$:

\[\langle\mathbf{C},\mathbf{P} \rangle = \sum_{ij}\mathbf{C}_{ij}\mathbf{P}_{ij}\]As you might have noticed, there are actually multiple ways to move points from one support to the other, each one yielding different costs. The one above is just one example, but we are interested in the assignment that results in the smaller cost. This is the problem of optimal transport between two discrete distributions, and its solution is the lowest cost $\text{L}_\mathbf{C}$ over all possible coupling matrices. This last condition introduces a constraint in the problem, because not any matrix is a valid coupling matrix. For a coupling matrix, all its columns must add to a vector containing the probability masses for $p(x)$, and all its rows must add to a vector with the probability masses for $q(x)$. In our example, these vectors contain 4 elements, all with a value of $1/4$. More generally, we can let these two vectors be $\mathbf{a}$ and $\mathbf{b}$, respectively, so the optimal transport problem can be written as:

\[\begin{align*} &\text{L}_\mathbf{C} = \min_{\mathbf{P}}\langle\mathbf{C},\mathbf{P}\rangle \\ \text{subject to } &\mathbf{P}\mathbf{1} = \mathbf{a} \\ &\mathbf{P}^{\top}\mathbf{1} = \mathbf{b} \end{align*}\]When the distance matrix is based on a valid distance function, the minimum cost is known as the Wasserstein distance.

There is a large body of work regarding the solution of this problem and its extensions to continuous probability distributions. For a more formal and comprehensive account, I recommend checking the book Computational Optimal Transport by Gabriel Peyré and Marco Cuturi, which is the main source for this post.

The bottom line here is that we have framed the problem of finding the distance between two distributions as finding the optimal coupling matrix. It turns out that there is a small modification that allows us to solve this problem in an iterative and differentiable way, that will work well with automatic differentiation libraries for deep learning, like PyTorch and TensorFlow.

Entropic regularization and the Sinkhorn iterations

We start by defining the entropy of a matrix:

\[H(\mathbf{P}) = -\sum_{ij}\mathbf{P}_{ij}\log\mathbf{P}_{ij}\]As in the notion of entropy of a distribution in information theory, a matrix with a low entropy will be sparser, with most of its non-zero values concentrated in a few points. Conversely, a matrix with high entropy will be smoother, with the maximum entropy achieved with a uniform distribution of values across its elements. With a regularization coefficient $\varepsilon$, we can include this in the optimal transport problem to encourage smoother coupling matrices:

\[\begin{align*} &\text{L}_\mathbf{C} = \min_{\mathbf{P}}\langle\mathbf{C},\mathbf{P}\rangle -\varepsilon H(\mathbf{P})\\ \text{subject to } &\mathbf{P}\mathbf{1} = \mathbf{a} \\ &\mathbf{P}^{\top}\mathbf{1} = \mathbf{b} \end{align*}\]By making $\varepsilon$ higher, the resulting coupling matrix will be smoother, and as $\varepsilon$ goes to zero it will be sparser, with the solution being close to that of the original optimal transport problem.

By introducing this entropic regularization, the optimization problem is made convex and can be solved iteratively using the Sinkhorn iterations2. The solution can be written in the form $\mathbf{P} = \text{diag}(\mathbf{u})\mathbf{K}\text{diag}(\mathbf{v})$, and the iterations alternate between updating $\mathbf{u}$ and $\mathbf{v}$:

\[\begin{align*} \mathbf{u}^{(k+1)} &= \frac{\mathbf{a}}{\mathbf{K}\mathbf{v}^{(k)}}\\ \mathbf{v}^{(k+1)} &= \frac{\mathbf{b}}{\mathbf{K}^{\top}\mathbf{u}^{(k+1)}} \end{align*}\]where $\mathbf{K}$ is a kernel matrix calculated with $\mathbf{C}$. Since these iterations are solving a regularized version of the original problem, the corresponding Wasserstein distance that results is sometimes called the Sinkhorn distance. The iterations form a sequence of linear operations, so for deep learning models it is straightforward to backpropagate through these iterations.

Sinkhorn iterations with PyTorch

There are additional steps that can be added to the Sinkhorn iterations in order to improve its convergence and stability properties. We can find a clean implementation of these by Gabriel Peyrè on GitHub. Let’s test it first with a simple example.

For this, we will work now with discrete uniform distributions in 2D space (instead of 1D space as above). In this case we are moving probability masses across a plane. Let’s define two simple distributions:

%matplotlib inline

import matplotlib.pyplot as plt

import numpy as np

np.random.seed(42)

n_points = 5

a = np.array([[i, 0] for i in range(n_points)])

b = np.array([[i, 1] for i in range(n_points)])

plt.figure(figsize=(6, 3))

plt.scatter(a[:, 0], a[:, 1], label='supp($p(x)$)')

plt.scatter(b[:, 0], b[:, 1], label='supp($q(x)$)')

plt.legend();

We can easily see that the optimal transport corresponds to assigning each point in the support of $p(x)$ to the point right above in the support of $q(x)$. For all points, the distance is 1, and since the distributions are uniform, the mass moved per point is 1/5. Therefore, the Wasserstein distance is $5\times\tfrac{1}{5} = 1$. Let’s compute this now with the Sinkhorn iterations.

import torch

from layers import SinkhornDistance

x = torch.tensor(a, dtype=torch.float)

y = torch.tensor(b, dtype=torch.float)

sinkhorn = SinkhornDistance(eps=0.1, max_iter=100, reduction=None)

dist, P, C = sinkhorn(x, y)

print("Sinkhorn distance: {:.3f}".format(dist.item()))

Sinkhorn distance: 1.000

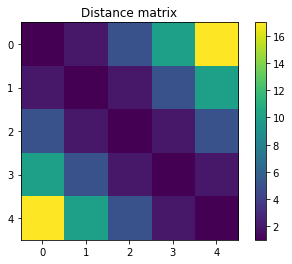

Just as we calculated. Now, it would be very interesting to check the matrices returned by the sinkhorn() method: P, the calculated coupling matrix, and C, the distance matrix. Let’s begin with the distance matrix:

plt.imshow(C)

plt.title('Distance matrix')

plt.colorbar();

The entry C[0, 0] shows how moving the mass in $(0, 0)$ to the point $(0, 1)$ incurs in a cost of 1. At the other end of the row, the entry C[0, 4] contains the cost for moving the point in $(0, 0)$ to the point in $(4, 1)$. This is the largest cost in the matrix:

since we are using the squared $\ell^2$-norm for the distance matrix.

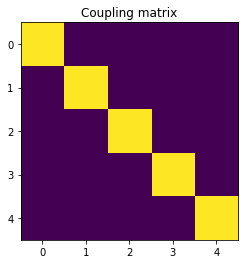

Let’s now take a look at the calculated coupling matrix:

plt.imshow(P)

plt.title('Coupling matrix');

This readily shows us how the algorithm effectively found that the optimal coupling is the same one we determined by inspection above.

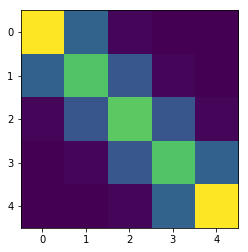

So far we have used a regularization coefficient of 0.1. What happens if we increase it to 1?

sinkhorn = SinkhornDistance(eps=1, max_iter=100, reduction=None)

dist, P, C = sinkhorn(x, y)

print("Sinkhorn distance: {:.3f}".format(dist.item()))

plt.imshow(P);

Sinkhorn distance: 1.408

As we discussed, increasing $\varepsilon$ has the effect of increasing the entropy of the coupling matrix. Here we see how $\mathbf{P}$ has become smoother, but also that there is a detrimental effect on the calculated distance, and the approximation to the true Wasserstein distance worsens.

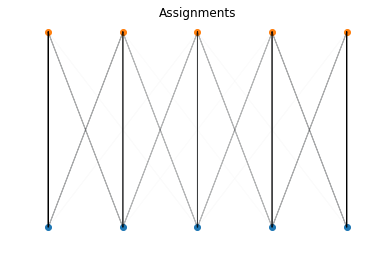

It’s also interesting to visualize the assignments in the space of the supports:

def show_assignments(a, b, P):

norm_P = P/P.max()

for i in range(a.shape[0]):

for j in range(b.shape[0]):

plt.arrow(a[i, 0], a[i, 1], b[j, 0]-a[i, 0], b[j, 1]-a[i, 1],

alpha=norm_P[i,j].item())

plt.title('Assignments')

plt.scatter(a[:, 0], a[:, 1])

plt.scatter(b[:, 0], b[:, 1])

plt.axis('off')

show_assignments(a, b, P)

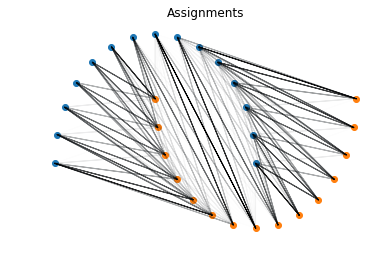

Let’s do this for a more interesting distribution: the Moons dataset.

from sklearn.datasets import make_moons

X, Y = make_moons(n_samples = 30)

a = X[Y==0]

b = X[Y==1]

x = torch.tensor(a, dtype=torch.float)

y = torch.tensor(b, dtype=torch.float)

sinkhorn = SinkhornDistance(eps=0.1, max_iter=100, reduction=None)

dist, P, C = sinkhorn(x, y)

print("Sinkhorn distance: {:.3f}".format(dist.item()))

show_assignments(a, b, P)

Sinkhorn distance: 1.714

Mini-batch Sinkhorn distances

In deep learning, we are usually interested in working with mini-batches to speed up computations. The Sinkhorn iterations can be adapted to this setting by modifying them with the additional batch dimension. After adding this change to the implementation (code here), we can compute Sinkhorn distances for multiple distributions in a mini-batch. Let’s do it here for another example that is easy to verify.

We will compute Sinkhorn distances for 4 pairs of uniform distributions with 5 support points, separated vertically by 1 (as above), 2, 3, and 4 units. This way, the Wasserstein distances between them will be 1, 4, 9 and 16, respectively.

n = 5

batch_size = 4

a = np.array([[[i, 0] for i in range(n)] for b in range(batch_size)])

b = np.array([[[i, b + 1] for i in range(n)] for b in range(batch_size)])

# Wrap with torch tensors

x = torch.tensor(a, dtype=torch.float)

y = torch.tensor(b, dtype=torch.float)

sinkhorn = SinkhornDistance(eps=0.1, max_iter=100, reduction=None)

dist, P, C = sinkhorn(x, y)

print("Sinkhorn distances: ", dist)

Sinkhorn distances: tensor([ 1.0001, 4.0001, 9.0000, 16.0000])

It works! Note also that now P and C are 3D tensors, containing the coupling and distance matrices for each pair of distributions in the mini-batch:

print('P.shape = {}'.format(P.shape))

print('C.shape = {}'.format(C.shape))

P.shape = torch.Size([4, 5, 5])

C.shape = torch.Size([4, 5, 5])

Conclusion

The notion of the Wasserstein distance between distributions and its calculation via the Sinkhorn iterations open up many possibilities. The framework not only offers an alternative to distances like the KL divergence, but provides more flexibility during modeling, as we are no longer forced to choose a particular parametric distribution. The iterations can be executed efficiently on GPU and are fully differentiable, making it a good choice for deep learning. These advantages have been exploited in recent works in machine learning, such as autoencoders3,4 and metric embedding5,6, making it promising for further applications in the field.

Acknowledgments

I’d like to thank Thomas Kipf for introducing me to the problem of optimal transport, insightful discussions and comments on this post; and Gabriel Peyrè for making code resources available online.

References

- See C. Bishop, "Pattern Recognition and Machine Learning", section 1.6.1. ↩

- Cuturi, Marco. "Sinkhorn distances: Lightspeed computation of optimal transport." Advances in neural information processing systems, 2013. ↩

- Tolstikhin, Ilya, et al. "Wasserstein auto-encoders." arXiv preprint arXiv:1711.01558, 2017.

- Patrini, Giorgio, et al. "Sinkhorn AutoEncoders." arXiv preprint arXiv:1810.01118, 2018.

- Courty, Nicolas, Rémi Flamary, and Mélanie Ducoffe. "Learning wasserstein embeddings." arXiv preprint arXiv:1710.07457, 2017.

- Charlie Frogner, Farzaneh Mirzazadeh, Justin Solomon. "Learning Embeddings into Entropic Wasserstein Spaces." ICLR (2019).